This post is part 2 of 5 in the Shipping Intelligence series. Start from the beginning.

At a certain shipping volume, data stops being the problem. You have carrier performance reports. You have cost breakdowns by service level and region. You have dashboards that track delivery outcomes across your network. The information exists.

What doesn’t exist—at least not reliably—is the time and bandwidth to turn that information into a decision before conditions shift again.

For operations teams managing thousands of shipments a month, this is where the real work happens. Not in gathering data, but in making sense of it fast enough to act.

The analysis bottleneck

Most conversations about shipping performance focus on the data itself: how accurate it is, how current it is, how complete it is. Those are legitimate concerns, but for teams at scale, the more common problem is keeping up with the sheer volume of it.

When you’re processing thousands of shipments a month across multiple carriers, service levels, and regions, the amount of performance data generated is significant. Carrier on-time rates vary by lane. Costs shift with zone and weight. Regional disruptions create temporary performance gaps that look like trends if you don’t catch them early enough.

Making sense of all of that requires someone to pull the data, organize it, separate signal from noise, and produce a recommendation, ideally before the underlying conditions change. In many operations teams, that process takes days. Sometimes longer.

By the time the analysis is complete, the window to act on it has often closed.
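To make the signal-versus-noise step concrete, here is a minimal sketch of one way to check whether a lane's recent on-time drop is larger than its sample size alone would explain. It uses a standard two-proportion z-score. The lane names, shipment counts, and the threshold of 2.0 are illustrative assumptions, not figures from any real platform.

```python
from math import sqrt

def on_time_shift(baseline_on_time, baseline_total, recent_on_time, recent_total):
    """Two-proportion z-score for the change in on-time rate (recent minus baseline)."""
    p_base = baseline_on_time / baseline_total
    p_recent = recent_on_time / recent_total
    pooled = (baseline_on_time + recent_on_time) / (baseline_total + recent_total)
    std_err = sqrt(pooled * (1 - pooled) * (1 / baseline_total + 1 / recent_total))
    return (p_recent - p_base) / std_err if std_err else 0.0

def flag_lanes(lanes, z_threshold=2.0):
    """Return lanes whose recent drop is bigger than sampling noise would explain."""
    return [
        name for name, counts in lanes.items()
        if on_time_shift(*counts) <= -z_threshold
    ]

# Hypothetical counts per lane:
# (baseline on-time, baseline total, recent on-time, recent total)
lanes = {
    "CHI-DAL": (1840, 2000, 165, 200),  # 92% baseline, 82.5% recently, 200 shipments
    "SEA-PHX": (920, 1000, 44, 50),     # 92% baseline, 88% recently, only 50 shipments
}

print(flag_lanes(lanes))
```

In this made-up data, both lanes look worse than their baseline, but only the first has enough recent volume for the drop to stand out from noise. A check like this does not replace judgment. It just narrows where judgment needs to be spent.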

What benchmarking actually tells you

One of the more underused tools in shipping analytics is benchmarking against similar shippers operating at comparable volume and complexity.

Internal benchmarking is useful. Knowing that your on-time delivery rate improved from one quarter to the next tells you something. But it doesn’t tell you whether that improvement is keeping pace with what’s achievable, or whether your carrier mix is performing the way it should relative to shippers in similar lanes with similar volumes.

External benchmarking answers a different set of questions: Are your carrier costs in line with what comparable shippers are paying? Is your delivery accuracy where it should be given your volume and geography? Are the performance gaps you’re seeing specific to your operation, or are they carrier-wide issues that other shippers are navigating too?

That context changes how you respond. A carrier underperformance issue that looks like a local problem might turn out to be a network-wide pattern. A cost trend that seems inevitable might be well above what comparable shippers are experiencing. Without external benchmarks, you’re optimizing in the dark.

According to EasyPost platform data, customers who gain that kind of visibility see a 23% increase in delivery accuracy. That result comes from consistently catching and correcting the small performance gaps that add up over time.
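The two benchmarking questions above (are your costs in line, and is a performance gap local or carrier-wide) can be sketched in a few lines. This is a simplified illustration, assuming you already have peer figures for comparable shippers. The function names, the peer values, and the one-point tolerance are all hypothetical.

```python
from statistics import median

def benchmark_gap(own_value, peer_values):
    """Relative gap between your metric and the peer median (positive = you are higher)."""
    peer_median = median(peer_values)
    return (own_value - peer_median) / peer_median

def classify_drop(own_change, peer_changes, tolerance=0.01):
    """Label a change in on-time rate as carrier-wide, local, or not meaningful."""
    if own_change >= -tolerance:
        return "no meaningful drop"
    if median(peer_changes) <= -tolerance:
        return "carrier-wide"  # peers on the same carrier dropped too
    return "local"             # peers held steady, so look at your own operation

# Hypothetical figures for one carrier
own_cost_per_shipment = 9.80
peer_costs = [8.10, 8.40, 8.75, 9.00, 8.60]
print(f"Cost gap vs. peer median: {benchmark_gap(own_cost_per_shipment, peer_costs):.1%}")

# Your on-time rate fell 4 points; compare against how peers moved
print(classify_drop(-0.04, [-0.05, -0.03, -0.045]))
print(classify_drop(-0.04, [0.0, 0.005, -0.002]))
```

The median is used here so that one unusual peer does not skew the comparison. A real benchmark would also need to control for lane, zone, and weight mix.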

The case for simulating before you commit

Carrier decisions are expensive to get wrong. Adding a new carrier to your mix requires integration work, operational adjustment, and a period of performance uncertainty before you know whether the move paid off. Adjusting service levels across high-volume lanes can shift costs and delivery outcomes in ways that are difficult to predict from rate cards alone.

Most teams make these decisions based on available data and informed judgment. That works until the variables involved outpace what judgment alone can reliably account for.

Simulation changes the math. Before committing to a carrier change or a service level adjustment, you can model the expected impact on cost, delivery time, and reliability using your actual shipping data. Not hypothetical averages. Your lanes, your volumes, your performance history.

The difference in practice is significant. Instead of asking “what do we think will happen if we add this carrier,” you can ask “what does our data suggest will happen”—and get an answer that accounts for the specific conditions of your operation rather than an industry generalization.

That’s not a small thing when the decision affects thousands of shipments a month.
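As a rough illustration of what simulating a carrier change can look like, the sketch below swaps a candidate carrier's per-lane figures into your existing lane volumes and compares the totals against today. The data structure, lane names, and numbers are hypothetical, and it assumes the candidate's cost and on-time figures for your lanes are already known, for example from benchmark or trial data.

```python
from dataclasses import dataclass

@dataclass
class LaneStats:
    shipments: int       # monthly volume on this lane
    cost: float          # average cost per shipment
    on_time_rate: float  # share of shipments delivered on time

def summarize(lanes):
    """Volume-weighted totals across lanes: (total cost, overall on-time rate)."""
    total = sum(lane.shipments for lane in lanes.values())
    cost = sum(lane.shipments * lane.cost for lane in lanes.values())
    on_time = sum(lane.shipments * lane.on_time_rate for lane in lanes.values())
    return cost, on_time / total

def simulate_switch(current, candidate, lanes_to_switch):
    """Return (cost change, on-time change) if the chosen lanes move to the candidate."""
    scenario = {
        name: candidate[name] if name in lanes_to_switch else stats
        for name, stats in current.items()
    }
    base_cost, base_on_time = summarize(current)
    new_cost, new_on_time = summarize(scenario)
    return new_cost - base_cost, new_on_time - base_on_time

# Hypothetical lane history and candidate-carrier figures
current = {
    "CHI-DAL": LaneStats(2000, 9.50, 0.90),
    "SEA-PHX": LaneStats(1000, 11.00, 0.94),
}
candidate = {"CHI-DAL": LaneStats(2000, 9.00, 0.93)}

cost_delta, on_time_delta = simulate_switch(current, candidate, {"CHI-DAL"})
print(f"Monthly cost change: {cost_delta:+.2f}")
print(f"On-time rate change: {on_time_delta:+.2%}")
```

A production model would account for far more (transit times, surcharges, capacity limits), but even a simple replay like this turns "what do we think will happen" into a number you can challenge.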

From data to decision

The common thread across analytics, benchmarking, and simulation is speed. More specifically, it’s the speed at which data becomes a decision rather than a report.

For operations teams at scale, that speed is constrained by the manual work required to move between those three steps. Pulling performance data, comparing it against benchmarks, modeling a potential change, and translating the output into a recommendation is a multi-step process that takes time your operation may not have.

The teams pulling ahead aren’t necessarily working with better data than their peers. They’re working with the same data, faster. They’ve built or adopted systems that compress the cycle from observation to action, and that compression shows up directly in cost and delivery performance.

If your team is spending more time analyzing shipping performance than acting on it, that’s the gap worth closing first.

More in the Shipping Intelligence series

- Part 1: Shipping Costs Are a Decision Problem, Not a Pricing Problem

- Part 2: Your Shipping Data Has the Answer. The Problem Is Finding It in Time.

- Part 3: What Would You Ask If You Had a Shipping Expert on Call? (coming soon)

- Part 4: Every Shipment Is a Decision. Most Teams Are Still Making It Manually (coming soon)

- Part 5: When the Problem Isn’t Your Shipping Data — It’s Where It Lives (coming soon)

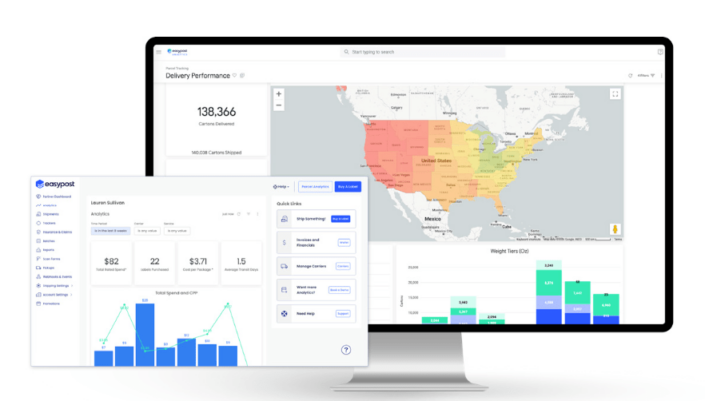

See what's really happening across your shipping network

Luma AI Insights gives operations teams real-time visibility, peer benchmarking, and simulation tools built on data from over a billion shipments, so analysis leads to action, not more reports.